If it feels these days as if everything in technology is about AI, that’s because it is. And nowhere is that more true than in the market for computer memory. Demand, and profitability, for the type of DRAM used to feed GPUs and other accelerators in AI data centers is so huge that it’s diverting the supply of memory away from other uses and causing prices to skyrocket. According to Counterpoint Research, DRAM prices have risen 80 to 90 percent so far this quarter.

The biggest AI hardware firms say they’ve secured their chips out as far as 2028, but that leaves everyone else (makers of PCs, consumer gizmos, and everything else that needs to temporarily store a billion bits) scrambling to deal with scarce supply and inflated prices.

How did the electronics industry get into this mess, and more importantly, how will it get out? IEEE Spectrum asked economists and memory experts to explain. They say today’s situation is the result of a collision between the DRAM industry’s historic boom and bust cycle and an AI hardware infrastructure build-out that’s without precedent in its scale. And, barring some major collapse in the AI sector, it will take years for new capacity and new technology to bring supply in line with demand. Prices might stay high even then.

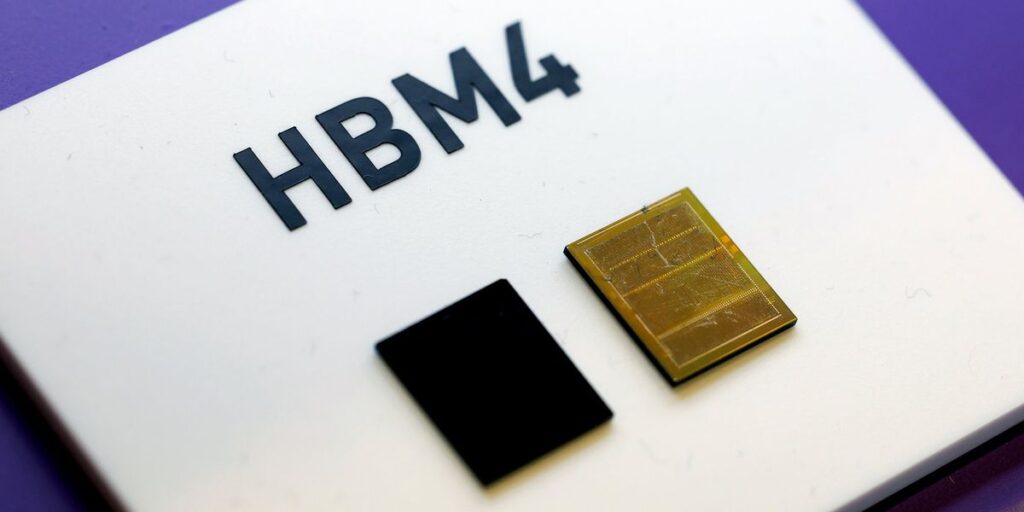

To understand both ends of the story, you need to know the main culprit in the supply and demand swing: high-bandwidth memory, or HBM.

What’s HBM?

HBM is the DRAM industry’s attempt to short-circuit the slowing pace of Moore’s Law by using 3D chip packaging technology. Each HBM chip is made up of as many as 12 thinned-down DRAM chips called dies. Each die contains a number of vertical connections called through-silicon vias (TSVs). The dies are piled atop one another and connected by arrays of microscopic solder balls aligned to the TSVs. This DRAM tower (well, at about 750 micrometers thick, it’s more of a brutalist office block than a tower) is then stacked atop what’s called the base die, which shuttles bits between the memory dies and the processor.

This complex piece of technology is then set within a millimeter of a GPU or other AI accelerator, to which it’s linked by as many as 2,048 micrometer-scale connections. HBMs are attached on two sides of the processor, and the GPU and memory are packaged together as a single unit.

The idea behind such a tight, highly connected squeeze with the GPU is to knock down what’s called the memory wall. That’s the barrier in energy and time of bringing the terabytes per second of data needed to run large language models into the GPU. Memory bandwidth is a key limiter to how fast LLMs can run.
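To make the memory wall concrete, here is a rough back-of-the-envelope sketch in Python. It rests on a simplifying assumption that is not from the article: generating one token at batch size 1 requires streaming every model weight from memory once, so bandwidth alone caps the token rate. The model size, weight precision, and bandwidth figures are hypothetical placeholders, not specifications of any real GPU.

```python
# Back-of-the-envelope sketch of the memory wall (all inputs are hypothetical).
# Assumption: generating one token at batch size 1 streams every model weight
# from memory once, so memory bandwidth sets a ceiling on tokens per second.

def max_tokens_per_second(params: float, bytes_per_param: float,
                          bandwidth_bytes_per_s: float) -> float:
    """Upper bound on decode speed when weight reads dominate."""
    model_bytes = params * bytes_per_param
    return bandwidth_bytes_per_s / model_bytes

# Hypothetical example: a 70-billion-parameter model stored at 2 bytes per
# weight, fed by 8 terabytes per second of memory bandwidth.
ceiling = max_tokens_per_second(70e9, 2, 8e12)
print(f"Bandwidth-bound ceiling: {ceiling:.1f} tokens per second")
```

Under these assumptions, doubling the bandwidth doubles the ceiling, which is one way to see why accelerator makers keep packing more HBM next to the processor.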

As a technology, HBM has been around for more than 10 years, and DRAM makers have been busy boosting its capability.

As the size of AI models has grown, so has HBM’s importance to the GPU. But that’s come at a cost. SemiAnalysis estimates that HBM generally costs three times as much as other types of memory and constitutes 50 percent or more of the cost of the packaged GPU.

Origins of the memory chip shortage

Memory and storage industry watchers agree that DRAM is a highly cyclical industry with huge booms and devastating busts. With new fabs costing US $15 billion or more, firms are extremely reluctant to expand and may only have the cash to do so during boom times, explains Thomas Coughlin, a storage and memory expert and president of Coughlin Associates. But building such a fab and getting it up and running can take 18 months or more, practically ensuring that new capacity arrives well past the initial surge in demand, flooding the market and depressing prices.

The origins of today’s cycle, says Coughlin, go all the way back to the chip supply panic surrounding the COVID-19 pandemic. To avoid supply-chain stumbles and support the rapid shift to remote work, hyperscalers (data center giants like Amazon, Google, and Microsoft) bought up huge inventories of memory and storage, boosting prices, he notes.

But then supply became more regular and data center expansion fell off in 2022, causing memory and storage prices to plummet. This recession continued into 2023, and even resulted in big memory and storage companies such as Samsung cutting production by 50 percent to try to keep prices from going below the costs of manufacturing, says Coughlin. It was a rare and fairly desperate move, because companies typically have to run plants at full capacity just to earn back their value.

After a recovery began in late 2023, “all the memory and storage companies were very wary of increasing their production capacity again,” says Coughlin. “Thus there was little or no investment in new production capacity in 2024 and through most of 2025.”

The AI data center boom

That lack of new investment is colliding headlong with a huge boost in demand from new data centers. Globally, there are nearly 2,000 new data centers either planned or under construction right now, according to Data Center Map. If they’re all built, it would represent a jump of roughly 20 percent in the global supply, which stands at around 9,000 facilities now.

If the current build-out continues at pace, McKinsey predicts companies will spend $7 trillion by 2030, with the bulk of that, $5.2 trillion, going to AI-focused data centers. Of that chunk, $3.3 trillion will go toward servers, data storage, and network equipment, the firm predicts.

The biggest beneficiary so far of the AI data center boom is undoubtedly GPU-maker Nvidia. Revenue for its data center business went from barely a billion dollars in the final quarter of 2019 to $51 billion in the quarter that ended in October 2025. Over this period, its server GPUs have demanded not just more and more gigabytes of DRAM but an increasing number of DRAM chips. The recently released B300 uses eight HBM chips, each of which is a stack of 12 DRAM dies. Competitors’ use of HBM has largely mirrored Nvidia’s. AMD’s MI350 GPU, for example, also uses eight 12-die chips.

With so much demand, an increasing fraction of the revenue for DRAM makers comes from HBM. Micron, the number three producer behind SK Hynix and Samsung, reported that HBM and other cloud-related memory went from being 17 percent of its DRAM revenue in 2023 to nearly 50 percent in 2025.

Micron predicts the total market for HBM will grow from $35 billion in 2025 to $100 billion by 2028, a figure larger than the entire DRAM market in 2024, CEO Sanjay Mehrotra told analysts in December. It’s reaching that figure two years earlier than Micron had previously expected. Across the industry, demand will outstrip supply “considerably… for the foreseeable future,” he said.
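For a sense of how steep that forecast is, the short Python sketch below converts the two endpoints of Micron’s prediction into an implied compound annual growth rate. The dollar figures and years come from the forecast above; treating the growth as smooth compounding between the endpoints is an assumption made here for illustration, and the function name is hypothetical.

```python
# Implied compound annual growth rate (CAGR) between two market-size figures.
# Assumption: growth compounds smoothly between the endpoints, which is an
# illustrative simplification and not something the forecast itself claims.

def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant yearly growth rate linking start to end."""
    return (end_value / start_value) ** (1 / years) - 1

# Endpoints from Micron's forecast: $35 billion (2025) to $100 billion (2028).
growth = implied_cagr(35e9, 100e9, 2028 - 2025)
print(f"Implied HBM market growth: {growth:.1%} per year")
```

By this estimate the HBM market would need to grow by a bit more than 40 percent a year, three years running.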

Future DRAM supply and technology

“There are two ways to address supply issues with DRAM: with innovation or with building more fabs,” explains Mina Kim, an economist with Mkecon Insights. “As DRAM scaling has become more difficult, the industry has turned to advanced packaging… which is just using more DRAM.”

Micron, Samsung, and SK Hynix combined make up the vast majority of the memory and storage markets, and all three have new fabs and facilities in the works. However, these are unlikely to contribute meaningfully to bringing down prices.

Micron is in the process of building an HBM fab in Singapore that should be in production in 2027. And it’s retooling a fab it purchased from PSMC in Taiwan that will begin production in the second half of 2027. Last month, Micron broke ground on what will be a DRAM fab complex in Onondaga County, N.Y. It will not be in full production until 2030.

Samsung plans to begin production at a new plant in Pyeongtaek, South Korea, in 2028.

SK Hynix is building HBM and packaging facilities in West Lafayette, Indiana, set to begin production by the end of 2028, and an HBM fab it’s building in Cheongju should be complete in 2027.

Speaking of his sense of the DRAM market, Intel CEO Lip-Bu Tan told attendees at the Cisco AI Summit last week: “There’s no relief until 2028.”

With these expansions unable to contribute for several years, other factors will be needed to increase supply. “Relief will come from a combination of incremental capacity expansions by existing DRAM leaders, yield improvements in advanced packaging, and a broader diversification of supply chains,” says Shawn DuBravac, chief economist for the Global Electronics Association (formerly the IPC). “New fabs will help at the margin, but the faster gains will come from process learning, better [DRAM] stacking efficiency, and tighter coordination between memory suppliers and AI chip designers.”

So, will prices come down once some of these new plants come on line? Don’t bet on it. “In general, economists find that prices come down much more slowly and reluctantly than they go up. DRAM today is unlikely to be an exception to this general observation, especially given the insatiable demand for compute,” says Kim.

In the meantime, technologies are in the works that could make HBM an even bigger consumer of silicon. The standard for HBM4 can accommodate 16 stacked DRAM dies, even though today’s chips only use 12 dies. Getting to 16 has a lot to do with the chip stacking technology. Conducting heat through the HBM “layer cake” of silicon, solder, and support material is a key limiter to going higher and to repositioning HBM inside the package to get even more bandwidth.

SK Hynix claims a heat conduction advantage through a manufacturing process called advanced MR-MUF (mass reflow molded underfill). Further out, an alternative chip stacking technology called hybrid bonding could help heat conduction by reducing the die-to-die vertical distance essentially to zero. In 2024, researchers at Samsung proved they could produce a 16-high stack with hybrid bonding, and they suggested that 20 dies was not out of reach.
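The arithmetic behind HBM’s growing appetite for silicon is simple enough to sketch. The Python snippet below multiplies stack height by the eight HBM stacks per GPU cited earlier for Nvidia’s B300. Holding the stack count at eight for taller stacks is an assumption made for illustration, not a description of any announced product, and the names used are hypothetical.

```python
# DRAM dies consumed per GPU as HBM stacks grow taller.
# Assumption: the number of HBM stacks per GPU stays at eight (the figure
# cited for today's B300); future packages may differ.

STACKS_PER_GPU = 8

def dram_dies_per_gpu(dies_per_stack: int, stacks: int = STACKS_PER_GPU) -> int:
    """Total DRAM dies packaged alongside one GPU."""
    return dies_per_stack * stacks

# 12-high is today's stack, 16-high is what HBM4 allows, and 20-high is the
# height Samsung researchers suggested was within reach.
for height in (12, 16, 20):
    print(f"{height}-high stacks: {dram_dies_per_gpu(height)} DRAM dies per GPU")
```

Under that assumption, moving from 12-high to 16-high stacks raises the DRAM die count per GPU by a third before any change in the number of GPUs shipped.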
