Facial recognition technology (FRT) dates back 60 years. Just over a decade ago, deep-learning methods tipped the technology into more useful, and more menacing, territory. Now, retailers, your neighbors, and law enforcement are all storing your face and building up a fragmentary photo album of your life.

But the story these photos can tell inevitably has errors. FRT makers, like those of any diagnostic technology, must balance two types of errors: false positives and false negatives. There are four possible outcomes: a correct match, a correct non-match, a false positive (a wrong match), and a false negative (a missed match).
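The two error types sit alongside the two correct results, and the error rates are simply counts of each mistake divided by the number of chances to make it. The Python sketch below is illustrative only: the function names and the toy trial data are assumptions, not anything taken from a real FRT system.

```python
from collections import Counter

def classify_outcome(same_person: bool, system_says_match: bool) -> str:
    """Label one face comparison with its outcome (hypothetical helper)."""
    if same_person and system_says_match:
        return "true_positive"    # correct match
    if not same_person and not system_says_match:
        return "true_negative"    # correct non-match
    if not same_person and system_says_match:
        return "false_positive"   # wrong person matched
    return "false_negative"       # right person missed

def error_rates(trials):
    """Return (false_negative_rate, false_positive_rate) for a list of
    (same_person, system_says_match) pairs."""
    counts = Counter(classify_outcome(s, m) for s, m in trials)
    genuine = counts["true_positive"] + counts["false_negative"]
    impostor = counts["true_negative"] + counts["false_positive"]
    fnr = counts["false_negative"] / genuine if genuine else 0.0
    fpr = counts["false_positive"] / impostor if impostor else 0.0
    return fnr, fpr

# Made-up data: 10 genuine comparisons (2 missed), 10 impostor comparisons (1 wrongly matched).
toy_trials = ([(True, True)] * 8 + [(True, False)] * 2
              + [(False, False)] * 9 + [(False, True)] * 1)
fnr, fpr = error_rates(toy_trials)
print(f"false-negative rate: {fnr:.2f}, false-positive rate: {fpr:.2f}")
```

In practice, lowering one rate usually means tolerating more of the other, which is the balance FRT makers have to strike.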

In best-case scenarios, such as comparing someone’s passport photo to a photo taken by a border agent, false-negative rates are around two in 1,000 and false-positive rates are less than one in 1 million.

In the rare event you’re one of those false negatives, a border agent might ask you to show your passport and take a second look at your face. But as people ask more of the technology, more ambitious applications could lead to more catastrophic errors. Let’s say that police are searching for a suspect, and they’re comparing an image taken with a security camera with a previous “mug shot” of the suspect.

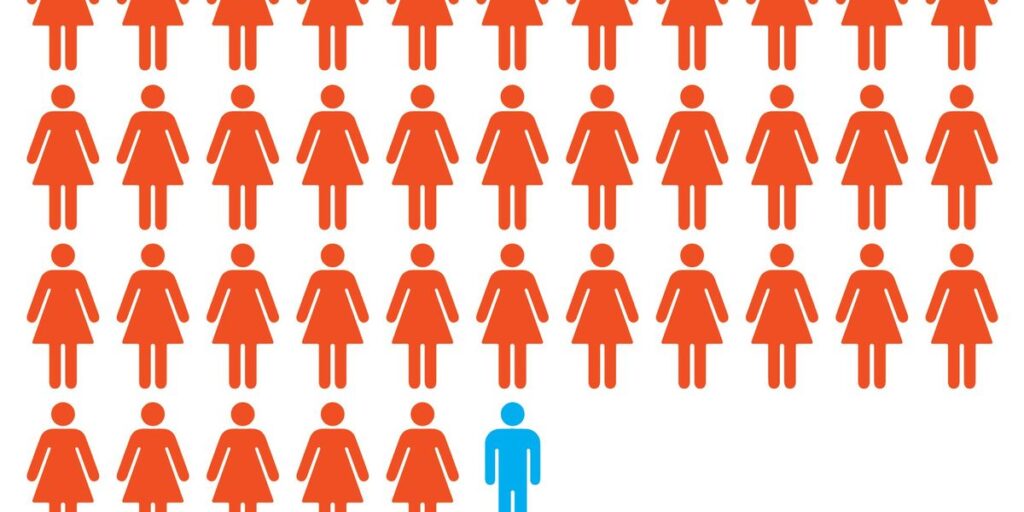

Training-data composition, differences in how sensors detect faces, and intrinsic differences between groups, such as age, all affect an algorithm’s performance. The United Kingdom estimated that its FRT exposed some groups, such as women and darker-skinned people, to risks of misidentification as much as two orders of magnitude greater than it did others.

Less clear images are harder for FRT to process. Credit: iStock

What happens with photos of people who aren’t cooperating, or vendors that train algorithms on biased datasets, or field agents who demand a swift match from a huge dataset? Here, things get murky.

Consider, for example, a busy trade fair using FRT to check attendees against a database, or gallery, of images of the 10,000 registrants. Even at 99.9 percent accuracy you’ll get about a dozen false positives or negatives, which may be worth the trade-off to the fair organizers. But if police start using something like that across a city of 1 million people, the number of potential victims of mistaken identity rises, as do the stakes.
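The scaling here is simple arithmetic, sketched below in Python. It rests on two simplifying assumptions that are not from the reporting: that “99.9 percent accuracy” means one error per 1,000 checks, and that each person is checked exactly once. The function name is hypothetical.

```python
def expected_errors(num_checks: int, accuracy: float) -> float:
    """Expected number of wrong results (false positives plus false negatives),
    assuming every check fails independently with probability 1 - accuracy."""
    return num_checks * (1.0 - accuracy)

# Population sizes from the text; the once-per-person check is an assumption.
trade_fair = expected_errors(10_000, 0.999)
city = expected_errors(1_000_000, 0.999)

print(f"trade fair: about {trade_fair:.0f} errors")
print(f"city: about {city:.0f} errors")
```

The error rate stays the same in both cases; only the number of people exposed to it changes, which is why a trade-off that looks tolerable at a trade fair looks different at city scale.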

What if we ask FRT to tell us whether the government has ever recorded and stored an image of a given person? That’s what U.S. Immigration and Customs Enforcement agents have done since June 2025, using the Mobile Fortify app. The agency conducted more than 100,000 FRT searches in the first six months. The size of the potential gallery is at least 1.2 billion images.

At that size, even assuming best-case images, the system is likely to return around 1 million false matches, and at a rate at least 10 times as high for darker-skinned people, depending on the subgroup.

Responsible use of this powerful technology would involve independent identity checks, multiple sources of information, and a clear understanding of the error thresholds, says computer scientist Erik Learned-Miller of the University of Massachusetts Amherst: “The care we take in deploying such systems should be proportional to the stakes.”
